This article is part of a series about setting up a home server. See this article for further details.

Most of this can now be done with the GUI. Red Hat’s Disk Utility has improved a lot since the version that was included with Karmic. To load it, go to Settings > Administration > Disk Utility.

Make sure all the disks to be used have no partitions on them, then go to Create > Raid array.

512KiB was the default stripe size and is what I used initially, but I later switched to a 128KiB stripe. The example below uses a 512KiB stripe.

Also, it is a good idea to reduce the size to 128MB or so below the maximum capacity. Drives of the same advertised capacity can vary slightly in actual size, and if you replace a disk you don’t want the rebuild to fail because the new drive is a few megabytes too small.

After selecting the member disks and pressing Create, the array will be in a “degraded” state until all the disks are synchronised. How long this takes depends on the size of the array, but I’d suggest letting it finish before proceeding: I received errors in Disk Utility when I tried to create a partition too soon.

Also note that I’ve used the GPT partitioning scheme. GPT is designed to replace the old MBR scheme, which has some limitations and can be restrictive these days. MBR is a safer option if your array is smaller than 2TB, but if you use new hard drives with 4096-byte sectors, such as Western Digital “Advanced Format” drives, you should use GPT. Using GPT means that fdisk cannot be used, because it doesn’t support GPT. In its place we use parted and gdisk (“aptitude install gdisk” if you don’t yet have it).

Creating a partition on the array

The only slightly tricky part is creating a partition that is aligned with the RAID stripe. You can’t create a partition starting at sector 0 because that’s where the partition table lives, so disk utilities will always offset the start of the first partition. However, to get the best performance you need to align the start of each partition with the stripe size.

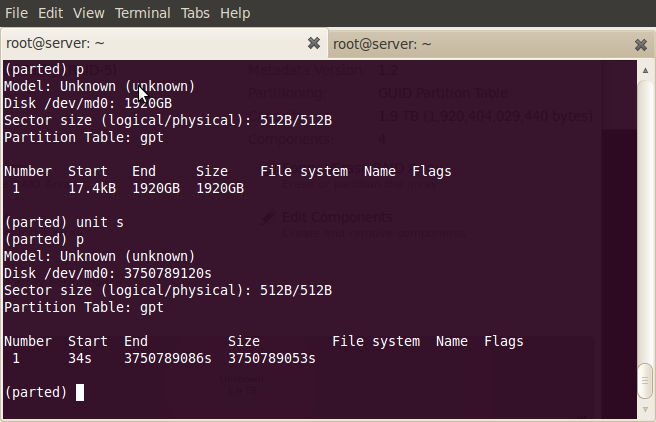

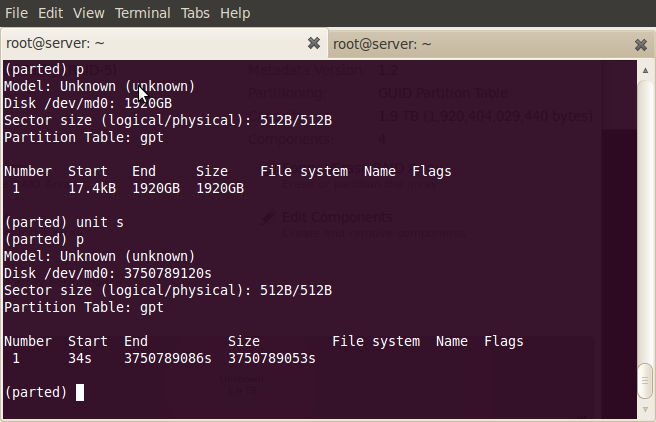

After creating a partition with disk utility I was greeted with the following:

![Screenshot-Raid5 (1.9 TB RAID-5 Array) [-dev-md0] — Disk Utility](https://blog.al4.co.nz/wp-content/uploads/2010/05/screenshot-raid5-1-9-tb-raid-5-array-dev-md0-e28094-disk-utility.png)

It appears that disk utility isn’t quite intelligent enough to create properly aligned partitions on its own just yet.

In my case the partition was offset by 17408 bytes (17KiB), which doesn’t align with the stripe size of 512KiB.

I found the easiest way to get a properly aligned partition was to create a partition in disk utility with no file system, and note the offset given to you by the warning message that appears after the partition has been created. Then, simply delete the partition and use gdisk to create the partition with the original offset plus the figure given to you in the warning.

To get the existing offset, run “parted /dev/md0”, type “unit b” to switch the working units to bytes and type p to print a list of partitions on the volume.

In my example the original offset was 17408 bytes (34 sectors * 512 bytes/sector), and the partition was out of alignment by 506880 bytes. This means it should actually be at byte 524288, which also happens to match the stripe size of 512KiB.

gdisk works in sectors, however, so we need to divide the result by 512, giving sector 1024 (524288/512=1024). So in this example you would run gdisk, type n to create a new partition, enter 1024 for the first sector, and accept the default for the last. For the partition type I used code 0700, which is “Linux/Windows data”.
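The arithmetic above can be sketched as a small shell function. This is only an illustration: the name `aligned_start_sector` is made up for this example, and it assumes 512-byte logical sectors and that the partition should start at the first stripe boundary at or after the offset Disk Utility chose.

```shell
#!/bin/sh
# Hypothetical helper: round a byte offset up to the next stripe boundary
# and express the result in sectors, which is the unit gdisk expects.
# Arguments: current offset in bytes, stripe size in bytes,
# sector size in bytes (assumed to be 512 if omitted).
aligned_start_sector() {
    offset=$1
    stripe=$2
    sector=${3:-512}
    # Integer round-up to a multiple of the stripe size.
    aligned=$(( (offset + stripe - 1) / stripe * stripe ))
    echo $(( aligned / sector ))
}

# Values from the example: 17408-byte offset, 512KiB (524288-byte) stripe.
aligned_start_sector 17408 524288
```

With the example values this prints 1024, the sector to give gdisk. Passing 131072 as the stripe size (128KiB) gives 256 instead.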

The second time around I used a stripe size of 128KiB (it seemed more “normal”), and with this stripe you would offset the start of the partition by 256 sectors (131072 bytes). I don’t believe the stripe size matters much – larger stripe sizes probably perform marginally better with larger files but I wanted something more general purpose as there will be a lot of small documents as well as large photos.

An example of creating a partition in gdisk follows. Note that I’m using /dev/null in this example to avoid destroying my laptop; you would want to use the actual blank RAID array, which is likely to be /dev/md0. You can delete partitions with gdisk (with d), but for newcomers it’s probably easier to delete any previous attempts in the GUI Disk Utility first.

sudo gdisk /dev/null

GPT fdisk (gdisk) version 0.5.1

Command (? for help): n

Partition number (1-128, default 1): 1

First sector (34-18446744073709551582, default = 34) or {+-}size{KMGT}: 256

Last sector (256-18446744073709551582, default = 18446744073709551582) or {+-}size{KMGT}:

Current type is 'Unused entry'

Hex code (L to show codes, 0 to enter raw code): 0700

Changed system type of partition to 'Linux/Windows data'

Command (? for help): w

Final checks completed. About to write GPT data. THIS WILL OVERWRITE EXISTING

MBR PARTITION!! THIS PROGRAM IS BETA QUALITY AT BEST. IF YOU LOSE ALL YOUR

DATA, YOU HAVE ONLY YOURSELF TO BLAME IF YOU ANSWER 'Y' BELOW!

Do you want to proceed, possibly destroying your data? (Y/N) Y

OK; writing new GPT table.

The operation has completed successfully

Creating the file system

Next create the file system on the partition:

mkfs.ext4 /dev/md0p1

I haven’t yet investigated tuning an ext4 partition for RAID 5 arrays. There are probably some tweaks to be made here; please comment if you have a suggestion.
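One commonly suggested starting point is to tell ext4 about the RAID geometry with the stride and stripe-width extended options of mkfs.ext4, where stride is the chunk size in file system blocks and stripe-width is the stride multiplied by the number of data disks. The sketch below only prints a candidate command rather than running it. The function name is made up for this example, and it assumes 4KiB file system blocks, a RAID 5 array (one disk's worth of parity) and the /dev/md0p1 partition used above. I have not benchmarked the result.

```shell
#!/bin/sh
# Hypothetical helper: print (not run) a mkfs.ext4 command with RAID hints.
# Arguments: chunk (stripe) size in KiB, total number of disks in the
# RAID 5 array, file system block size in KiB (assumed to be 4 if omitted).
ext4_raid5_command() {
    chunk_kib=$1
    disks=$2
    block_kib=${3:-4}
    stride=$(( chunk_kib / block_kib ))       # file system blocks per chunk
    data_disks=$(( disks - 1 ))               # RAID 5 uses one disk's worth of parity
    stripe_width=$(( stride * data_disks ))   # file system blocks per full stripe
    echo "mkfs.ext4 -b $(( block_kib * 1024 )) -E stride=${stride},stripe-width=${stripe_width} /dev/md0p1"
}

# A 128KiB chunk on a four-disk RAID 5 array.
ext4_raid5_command 128 4
```

For a 128KiB chunk on four disks this prints a command with stride=32 and stripe-width=96. Check the numbers against your own array before running the printed command.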

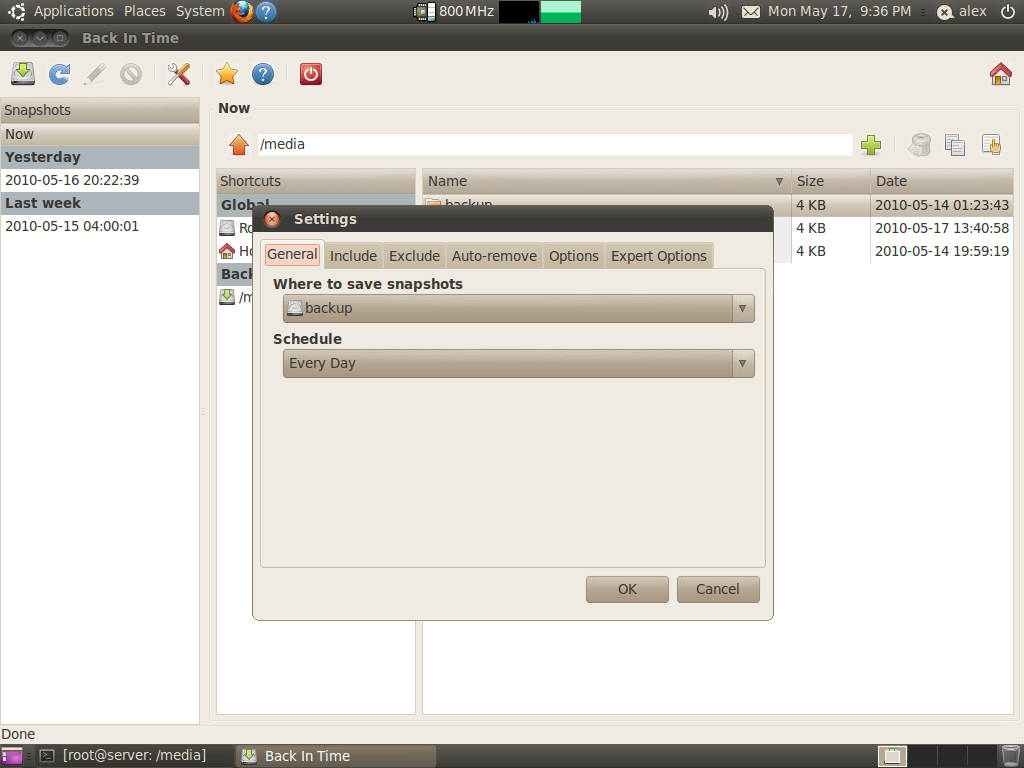

Autostarting and mounting the array

At this point you should have a working RAID array, shown as running in Disk Utility, with an ext4 partition on it. If you reboot you will notice that the array doesn’t start automatically; you have to go into Disk Utility and start it manually each time.

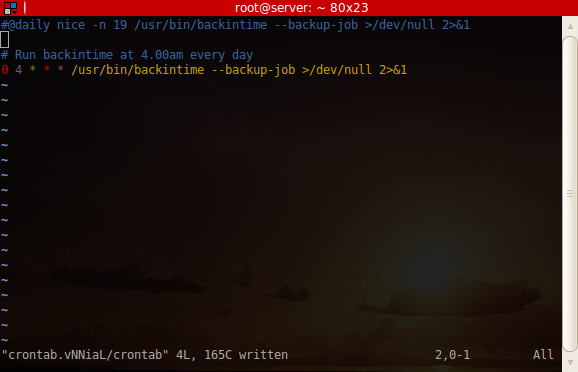

Getting it to start by itself also requires falling back to the command line. Make sure the array is running before proceeding.

First we need to get the config line:

mdadm --detail --scan

You should get a line like the following:

ARRAY /dev/md0 level=raid5 num-devices=4 metadata=01.02 name=:Raid5 UUID=7198dc4b:0b61431d:99f71126:c2d41815

Paste this line into /etc/mdadm/mdadm.conf, below the line that says “definitions of existing MD arrays”. Reboot, load disk utility and you should see that your array has been started automatically.
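If you would rather not paste by hand, the same step can be scripted. The following is a minimal sketch, not a tested installer: the function name `add_array_line` is made up for this example, it appends to the end of the file (which is below the “definitions of existing MD arrays” comment in a stock file), and it assumes a single array so that the scan prints one line.

```shell
#!/bin/sh
# Hypothetical helper: append an ARRAY line to a config file unless an
# identical line is already present.
# Arguments: the line to add, the path of the config file.
add_array_line() {
    line=$1
    conf=$2
    # -x matches whole lines only, -F treats the line as a fixed string,
    # -q suppresses output so only the exit status is used.
    if ! grep -qxF -- "$line" "$conf" 2>/dev/null; then
        printf '%s\n' "$line" >> "$conf"
    fi
}

# Real usage would look something like this (as root, with the array running):
#   add_array_line "$(mdadm --detail --scan)" /etc/mdadm/mdadm.conf
```

Checking for an existing line first means the helper can be re-run without leaving duplicate ARRAY entries behind.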

Automounting the file system on the array simply involves putting a line in /etc/fstab, which unfortunately every technical Linux user still needs to know about (it seems to be the most prominent legacy hangover, but it’s still a pretty good system when you know how it works). My line is as follows:

UUID=47b4d934-c0c3-46c6-b9f7-09c1c7a94774 /media/data ext4 rw,nosuid,nodev 0 1

Note that the UUID here is the UUID of the partition and not the RAID array. To get the UUID of your partition, use the command “sudo blkid” and note the UUID of the partition on your RAID array, which should be /dev/md0p1.
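To avoid copying the UUID by hand, the fstab line can be generated. This is a rough sketch: the function name `fstab_line` is made up for this example, the mount options are simply the ones from my line above, and the commented usage assumes the partition is /dev/md0p1 as in the mkfs step.

```shell
#!/bin/sh
# Hypothetical helper: build an fstab line from a file system UUID and a
# mount point, using the same type and options as the line shown above.
fstab_line() {
    uuid=$1
    mount_point=$2
    echo "UUID=${uuid} ${mount_point} ext4 rw,nosuid,nodev 0 1"
}

# Real usage would look something like this:
#   fstab_line "$(sudo blkid -s UUID -o value /dev/md0p1)" /media/data
# Here the UUID from the example is passed in directly.
fstab_line 47b4d934-c0c3-46c6-b9f7-09c1c7a94774 /media/data
```

The function only prints the line. Review it before adding it to /etc/fstab, since a bad entry there can interfere with booting.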

Another useful command is “cat /proc/mdstat” which gives some info about active arrays.
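While an array is synchronising, /proc/mdstat includes a progress line that mentions “resync” or “recovery”, so it can also be used to check whether the initial sync has finished. A minimal sketch, with a made-up function name and a deliberately loose text match:

```shell
#!/bin/sh
# Hypothetical helper: succeed if an mdstat-style file reports a resync or
# recovery. Reads /proc/mdstat when no file is given.
array_is_syncing() {
    grep -Eq 'resync|recovery' "${1:-/proc/mdstat}" 2>/dev/null
}

if array_is_syncing; then
    echo "Array is still synchronising"
else
    echo "No resync or recovery reported"
fi
```

The match is intentionally simple and does not distinguish between arrays, so on a machine with more than one array read the full output instead.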

You shouldn’t need to create the mount point in /media; in my experience this happens automatically.

Next part – Creating user accounts and setting up the file shares
